AI has limits, even if many AI people can't see them

On Ben Recht's fantastic new book

Towards the end of his new book, The Irrational Decision, Ben Recht explains what he has set out to do.

Most books on technology either take the side that all technology is bad, or all technology is good. This isn’t one of those books. Such books focus too much on harms and not enough on limits. Limits are more empowering. Throughout the book, I’ve maintained that mathematical rationality is limited in what kinds of problems it is best placed to solve but has sweet spots that have yielded remarkable technological advances.

It may be that more books on technology escape the good-bad dichotomy than Ben allows. Even so, I haven’t read another book that is nearly as useful in explaining why and where the broad family of approaches that we (perhaps unfortunately) call AI work, and why and where they don’t. Ben (who is a mate) combines a deep understanding of the technologies with a grasp of the history and ability to write clearly and well about complicated things. I learned a lot from this book. Very likely, you will too.

The good-bad dichotomy that Ben describes does indeed shape a whole lot of our current debate around “mathematical rationality” and AI. Regarding the first, Nate Silver’s book On The Edge argues for the kinds of Bayesian rationality that Silicon Valley people like to talk about. It praises the “River” of people who think about the world in terms of statistical probabilities, which you update whenever new information becomes available. As Ben suggests in a separate review essay with Leif Weatherby, the “River” wraps professional poker, rationalist thinking about AI, sports betting and crypto bro philosophizing together into a single package that appears sort-of-coherent, and even perhaps brilliant, if you don’t look at it too closely. As Ben suggests, rationalists of this persuasion tend to assume that “computers can make better decisions than humans,” and are often fervent cheerleaders for AI (Silver, in fairness to him, isn’t nearly as fervent as some others). Other books, like Emily Bender and Alex Hanna’s The AI Con, begin from just the opposite assumption: that most of what we call “AI” is hype. Bender and Hanna tell us that if we start poking around behind the grand spectacle and booming voice of “mathy math,” we will find the rather unimpressive wizard of machine learning, who is actually only capable of fancy spell-check, telling radiologists which parts of an image they might want to take a look at, and other such “well scoped” activities.

Neither AI Rationalism nor AI-Con Thought is all that helpful in explaining the technologies we confront right now. The former tends to launch into fantasy, repeatedly demonstrating how starting from ridiculous premises allows you to reason your way to ridiculous results. The latter tends to curdle into denialism, claiming ever more loudly that disliked technologies are useless even as they find ever more uses. We ought to be much more worried about the claims of the triumphalists than the denialists, since they are far more influential. But to successfully deflate their claims, we need a more grounded perspective on what AI and related technologies are capable of than can be provided by the denialists.

The Irrational Decision provides strong reasons for skepticism about the grander aspirations of the rationalist project, while explaining why machine learning has remarkable uses in its appropriate domain. Those who are embroiled most closely with the rationalist project have a hard time understanding its limits because those limits shape their own world view. The one weird trick of rationalism is to recompose complex problems in terms that can readily be rationalized. When that is good, it is very, very good, but when it is bad, it is horrid. To understand this, it’s first necessary to understand where rationalism comes from.

*******

Much of the discussion of The Irrational Decision is historical. It reaches back to the 1940s and 1950s to figure out where rationalism actually comes from, providing a short history that is a little like what Erickson et al.’s How Reason Almost Lost Its Mind might have been if it focused more on statistics and operations research than economics. Ben’s aim in all of this is to identify how ‘mathematical rationality’ came to be a relatively coherent set of ideas about how we might better organize society.

The story he tells is necessarily messy, but some important broad themes emerge, most importantly around the development of optimization theory. Linear programming makes it possible to find optimal ways to allocate resources within a limited budget so long as the constraints are linear (when they are not, all computational hell can break loose). Optimal control theory allows a control system to adjust optimally to its environment (again, under restrictive assumptions about the constraints). Game theory can postulate - and often even discover - optimal strategies to play against opponents in strategic situations. These toolkits overlap with others. A family of techniques, ranging from simulated annealing to the ancestral forms of the gradient descent/backpropagation that “deep learning” relies on, provides ways to discover superior local optima in more complex situations. Randomized clinical trials (RCTs) provide possible ways to discover whether a given intervention (a drug; a policy measure) works or not.

All of these approaches suggest the superiority of technical forms of analysis over human judgments. RCTs apply protocols and statistical analysis to try to discover causal relationships (according to the standard story), or justify interventions (according to Ben’s). Other approaches involve the discovery of optimal solutions, given convenient mathematical assumptions and simplifications. Others still involve the discovery of local optima (that is: solutions that are better than others that are readily visible in their neighborhood), which may be better than those that ordinary humans could reach.

Rationalist approaches are very powerful in their domains of proper application, but you need some sense of what those domains are. Ben suggests that there is a “sweet spot” for many or most computational tools. For example, statistics is not useful for situations where a treatment always works (why would you need complicated tools of inference?), or where outcomes are too variable and unpredictable, but for the messy zone between the two. When you hit the space where your tools have traction on reality despite their imperfections, you can accomplish extraordinary things. For example, in his own review of the book, Dan Davies talks about

the incredibly productive feedback loop between “optimisation algorithms are really demanding in terms of computer processing” and “optimisation algorithms are really useful for designing better and faster computers”.

As Ben describes it, designers were able to reduce the incredibly complex challenges of chip design into an optimizable task by making simplifying assumptions about “standard cells” and combining them with simulated annealing algorithms that could discover optima that would otherwise not be easily visible. This, then, as per Dan, allowed faster chips to be developed, which in turn could run more powerful algorithms, and so on, in a loop.
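For readers who haven't met simulated annealing, here is a minimal Python sketch of the general idea on a toy placement problem. It is only an illustration under loud assumptions: the six "cells", the list of wires, the cooling schedule and the function names are all invented here, and real chip placement tools are vastly more elaborate. The point is just to show how random swaps, with occasional acceptance of worse layouts early on, can find arrangements that are not easily visible from the starting point.

```python
import math
import random

# Hypothetical toy netlist: pairs of cells that need a wire between them.
WIRES = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (0, 5), (1, 4)]

def wire_length(order):
    """Total wire length when the cells sit in one row in the given order."""
    slot = {cell: position for position, cell in enumerate(order)}
    return sum(abs(slot[a] - slot[b]) for a, b in WIRES)

def anneal(order, steps=5000, start_temp=5.0, seed=0):
    """Swap random pairs of cells; accept worse layouts with a probability
    that shrinks as the (assumed linear) temperature schedule cools."""
    rng = random.Random(seed)
    current = list(order)
    current_cost = wire_length(current)
    best, best_cost = list(current), current_cost
    for step in range(steps):
        temp = start_temp * (1 - step / steps) + 1e-9
        i, j = rng.sample(range(len(current)), 2)
        current[i], current[j] = current[j], current[i]
        new_cost = wire_length(current)
        delta = new_cost - current_cost
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current_cost = new_cost
            if new_cost < best_cost:
                best, best_cost = list(current), new_cost
        else:
            # Reject the move: undo the swap.
            current[i], current[j] = current[j], current[i]
    return best, best_cost

start = [3, 0, 5, 1, 4, 2]
layout, cost = anneal(start)
print("start cost:", wire_length(start), "annealed cost:", cost)
```

Notice how much work the simplification is doing: the whole problem has been reduced to one number (total wire length) over a small set of interchangeable cells, which is exactly what makes it optimizable.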

But treating rationalism as a universal tool of discovery is problematic, especially given that these techniques are characteristically limited or start from implausible simplifying assumptions. Daphne Koller, one of the researchers whom Ben describes, discovered some startlingly effective ways to reduce the complexity of poker so that it became more nearly “solvable.” But Koller eventually abandoned the study of game theory:

“Understanding the world around us is more important than understanding the optimal way to bluff,” she told me. In her experience, when she needed to model people in simulations of complex systems, modeling their decisions as random got her 90 percent of the way to a solution. How to best make decisions under wide-ranging uncertainty was far less cut-and-dried. For Koller, once you stepped away from the game board and had to make decisions in reality, understanding uncertainty and the myriad ways it could arise and impact plans was more important than strategy.

As it turned out, poker algorithms too generated feedback loops, not through simplifying chip design, but through simplifying human beings (C. Thi Nguyen’s book The Score provides a broader account of how this works). There is an important sense in which optimal poker theory was less successful in optimizing poker than in optimizing poker players, inspiring a style of play in which professionals “started memorizing expected value tables from poker solvers so that they could play ‘game theory optimal’ in big poker tournaments.” Perhaps that can be described as an improvement in human affairs. I’m not seeing it myself.

*******

Understanding mathematical rationalism helps us understand the strengths and limitations of AI. AI isn’t just a form of rationalism, but the combined application of a variety of long established rationalist techniques - neural nets (which go back to the 1950s), statistical learning and backpropagation - made possible by more powerful computers and enormous amounts of readily available data. Claude Shannon’s methodology for modeling language, which is the intellectual basis of “large language models,” is “an instance of statistical pattern recognition” or machine learning. And machine learning itself is no more and no less than a powerful statistical tool. I found this passage maybe the most clarifying explanation of what it does that I’ve ever read.

To frame the prototypical machine learning problem, I like to think about a hypothetical spreadsheet. Each row of the spreadsheet corresponds to some unit or example. But I don’t care what the units mean. I just know that I have a bunch of columns filled in with data. And I’m told one of the columns is special. I am about to get a load of new rows in the spreadsheet, but someone downstairs forgot to fill in the special column. Management has tasked me with writing a formula to fill in what should be there. For whatever reason, I don’t get to see these new rows and have to build the formula from the spreadsheet I have. The formula can use all sorts of spreadsheet operations: It can assign weights to different columns and add up the scores, it can use logical formulas based on whether certain columns exceed particular values, it can divide and multiply. … I’ll do an experiment. I’ll take the last row of my spreadsheet and pretend I don’t have the special column. I’ll write as many formulas as I can. … But why single out that last row? I can do something similar for every row! I’ll invent a set of plausible functions. I’ll evaluate how well they predict on the spreadsheet I have. I’ll choose the function that maximizes the accuracy. This is more or less the art of machine learning.
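The spreadsheet picture can be sketched in a few lines of Python. This is a toy illustration, not anything taken from the book: the six rows, the family of threshold "formulas" and every function name are invented for the example. It only shows the bare recipe from the passage: invent a set of plausible functions, score each one on the rows you already have, and keep the most accurate.

```python
# Toy "spreadsheet": each row is (column_a, column_b, special_column).
# The data are made up purely for illustration.
ROWS = [
    (1.0, 5.0, 0),
    (2.0, 3.0, 0),
    (3.0, 8.0, 0),
    (6.0, 1.0, 1),
    (7.0, 9.0, 1),
    (8.0, 2.0, 1),
]

def make_threshold_rule(column, cutoff):
    """A candidate formula: predict 1 when the chosen column exceeds cutoff."""
    def rule(row):
        return 1 if row[column] > cutoff else 0
    rule.description = f"column {column} > {cutoff}"
    return rule

def candidate_rules():
    """The (hypothetical) set of plausible functions we are willing to try."""
    return [make_threshold_rule(column, cutoff)
            for column in (0, 1)
            for cutoff in range(10)]

def accuracy(rule, rows):
    """Fraction of rows where the formula reproduces the special column."""
    hits = sum(1 for row in rows if rule(row) == row[-1])
    return hits / len(rows)

def fit(rows):
    """Pick the candidate formula that is most accurate on the rows we have."""
    return max(candidate_rules(), key=lambda rule: accuracy(rule, rows))

best = fit(ROWS)
print(best.description, accuracy(best, ROWS))

# Fill in the special column for a new row where it is missing.
new_row = (4.5, 0.0, None)
print("prediction for new row:", best(new_row))
```

Nothing in the code knows or cares what the columns mean, which is the point of the passage. Whether the chosen formula is any good on rows it has never seen is a separate question.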

Guessing the missing rows of spreadsheets and optimizing turns out to have a lot of useful applications: not just language models, but protein folding, recognizing handwriting and a myriad of other applications. Equally, machine learning is just another form of optimization and/or prediction. Very large chunks of Silicon Valley’s current business model involve taking complex situations that don’t look like optimization or prediction problems, simplifying and redescribing them and then finding solutions.

Just as with statistics, there is a “sweet spot” for machine learning. It is not useful for situations where you have a genuinely clean mathematical abstraction, which you can turn into running code. Nor is it useful for situations that are too messy or complicated to be predictable (it is, after all, an application of statistical technique). You want to use it in the intermediary situations where there isn’t an obvious neat solution, but where the clunky and computationally expensive techniques of machine learning can discover a useful approximation, even if you may not understand quite what it is based on or how it works.

*******

All this implies some important problems of evaluation. How can you tell where machine learning is a useful way to proceed? How can you tell which machine learning approach is the best one to apply for a given problem? And behind all this lurks the bigger question that we began with. How can you tell when machine learning techniques in general (or other rationalist shortcuts) are better or worse than ordinary human judgment?

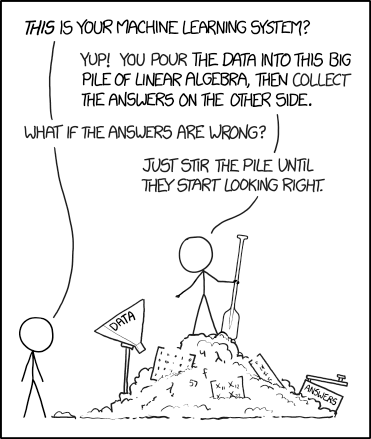

The answer to the first is unfortunately indeterminate. As best as I understand Ben’s argument, the only real way to discover whether machine learning works for a given kind of problem is to come up with a working machine learning solution. There is no genuinely satisfactory ex ante way to distinguish between the problems that machine learning can solve for, and those that they can’t. Furthermore, as Ali Rahimi and Ben have noted elsewhere, AI practitioners rely more on “alchemy” than a deep understanding of why some approaches work and others don’t. More succinctly, XKCD:

As for how to tell which machine learning algorithms work better than others, computer scientists have come up with an approach commonly called the Common Task Framework (or variants thereon). Create a common dataset (canonically: photos of cats and dogs) and share all (or, far more usually these days, some) of it with different teams of researchers. Then come up with a common task that can be performed on the data and can be evaluated in a fairly straightforward way (can the algorithm distinguish between cats and dogs?). Then the different teams can come up with algorithms that compete against each other, and that can perhaps be tested on data that has not been shared publicly, to guard against overfitting and teaching to the test. The algorithm which works best (say, has the highest percentage accuracy in distinguishing between cats and dogs) is, ipso facto, the best algorithm for the task.
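The mechanics of this setup are simple enough to sketch in Python. What follows is a toy illustration under stated assumptions, not a description of any real benchmark: the synthetic one-feature "cats vs dogs" data, the three made-up teams and all the function names are invented for the example. It shows a shared training set, a held-back test set, and a leaderboard ranked by accuracy on the held-back data.

```python
import random

def make_dataset(n, seed):
    """Synthetic stand-in for 'cats vs dogs': one numeric feature, noisy label."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(0, 10)
        label = "dog" if x + rng.gauss(0, 1) > 5 else "cat"
        data.append((x, label))
    return data

public_train = make_dataset(200, seed=1)  # shared with every team
hidden_test = make_dataset(200, seed=2)   # held back by the organizers

def team_always_dog(train):
    """Baseline team: ignores the data entirely."""
    return lambda x: "dog"

def team_threshold(train):
    """Picks the cutoff that scores best on the shared training data."""
    def hits(cutoff):
        return sum(("dog" if x > cutoff else "cat") == y for x, y in train)
    best_cutoff = max((c / 2 for c in range(21)), key=hits)
    return lambda x: "dog" if x > best_cutoff else "cat"

def team_memorizer(train):
    """Copies the label of the closest training example (prone to overfit)."""
    def predict(x):
        nearest = min(train, key=lambda pair: abs(pair[0] - x))
        return nearest[1]
    return predict

def score(model, data):
    """Fraction of examples the model labels correctly."""
    return sum(model(x) == y for x, y in data) / len(data)

def leaderboard(teams, train, test):
    """Train each entry on the shared data, rank by held-back accuracy."""
    results = [(name, score(build(train), test)) for name, build in teams.items()]
    return sorted(results, key=lambda entry: entry[1], reverse=True)

TEAMS = {
    "always_dog": team_always_dog,
    "threshold": team_threshold,
    "memorizer": team_memorizer,
}
board = leaderboard(TEAMS, public_train, hidden_test)
for name, held_back_accuracy in board:
    print(f"{name:12s} {held_back_accuracy:.3f}")
```

The memorizer scores perfectly on the shared data it has already seen, which is why the ranking uses the held-back set. Note also that the whole exercise presupposes a single agreed number to rank by.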

And this gets us to one of the major contributions of Ben’s book. A lot of people in AI claim that we can apply this framework to answer a very big question. Are AI algorithms generally superior to human beings at performing some set of cognitive tasks? There are a variety of common task framework tests that purport to do this, some with names that … beg questions. If you are hanging around the right (or wrong) places on the Internet, you will regularly read this or that excitable claim that humanity is doomed to be superseded because of the performance of AI on this or that test.

Ben suggests that such claims tend to make a fundamental error. He describes some famous results from the research of psychologist Paul Meehl on medical and other decisions, which suggested that “statistical prediction provided more accurate judgments about the future than clinical judgments” under certain conditions. But the conclusion that Ben comes to is not that this means that statistical prediction is generally better than expert judgment. Instead, it is better when there are clearly defined outcomes, good data, and clear reference cases that can be used for comparison. There are many situations in which this is not true, and cannot readily be made true.

If we use common task type approaches to measure success, we are loading the dice in favor of those tasks that can be described in terms of clear outcomes, and tested with good data, and loading them against those tasks that do not have such nice characteristics. Ben describes this even more pungently. Tasks that can be defined in those ways are definitionally the tasks that computer or other automated approaches will be able quickly to do better than human beings. Paradoxically:

If we can measure why humans might be able to outperform machines, then we can build machines to outperform people. On the other hand, if we can’t cleanly articulate a clean set of actions, outcomes, measurements, and metrics, then we can’t mechanize problem solving. It is this digitization, translating the world into the language of the computer, that is needed to automate.

The universe of tasks with clear goals, conditions and data is both the universe of tasks that are easily measured and the universe of tasks that computers and automated processes can carry out well. The one characteristic more or less predicts the other. This, then, is what makes it so hard for mathematical rationalists to see the limitations to their perspective. The tools and measures that they use to understand and solve problems could almost have been purpose crafted to confirm their broad intellectual biases by concealing the problems that their methods can’t easily solve.

*******

This helps us to situate the debate that is happening right now about AI. There are many AI enthusiasts, who believe that it can be applied to do pretty well any task that humans can do, as well as humans or better. Getting to this is just a matter of scaling and engineering, and is going to happen Real Soon Now. There are AI skeptics, who argue that its benefits are limited to a narrow range of well defined tasks, or even (I see the claim regularly, though it is rarely defended in any particularly sophisticated way) that the benefits are non-existent. These positions often map onto “AI good” and “AI bad,” along the lines that Ben suggests.

As per the quote at the beginning of this post, Ben doesn’t really engage with the question of whether AI is good or bad in any general sense. Instead, he proposes that it can carry out many tasks, including tasks that we might not anticipate right now, but that there are limits. AI, like mathematical rationality more generally, has a sweet spot: problems that are complicated enough that they can’t be solved by other computationally cheaper approaches, but that have enough regularities to be workable. Within that sweet spot, it can do extraordinary things. Outside the sweet spot, it may be redundant or completely useless. And there is an ambiguous zone in between, where it can do stuff but imperfectly.

It isn’t possible, except in very general terms, to define ex ante what the sweet spot is. Clever engineers are perpetually trying to expand it. Self-driving cars provide one example of a problem that has proved far harder to solve than engineers thought (as Ben puts it, “we don't know how to articulate 'good driver' into a clean statistical outcome”), but they are brute-forcing the problem so that self-driving is far more plausible across different environments than it used to be. Equally, there are many, many edge cases. One way to deal with many of them might be to try to simplify them out of existence (e.g. by having only self-driving cars without the unpredictabilities of idiosyncratic human drivers, or cyclists, or … or … or). Such simplification is a version of what management cyberneticists call ‘variety reduction.’

Equally, there are challenges that appear to be fundamentally resistant to mathematical rationality, including bureaucracy and politics:

societies are not computer chips. While I noted in chapter 2 that computer chips were often analogized as microscopic cities, chips were always designed to be hermetically sealed and perfectly controlled. This is what made them optimizable. Real societies, on the other hand, had people. While it’s convenient to model and view the population, its health, and its market flows as mathematical abstractions, these run into the limits of the messiness that people bring to bear.

In The Sciences of the Artificial, Herbert Simon makes a closely related argument:

When we come to the design of systems as complex as cities, or buildings, or economies, we must give up the aim of creating systems that will optimize some hypothesized utility function, and we must consider whether differences in style of the sort I have just been describing do not represent highly desirable variants in the design process rather than alternatives to be evaluated as “better” or “worse.” Variety, within the limits of satisfactory constraints, may be a desirable end in itself, among other reasons, because it permits us to attach value to the search as well as its outcome—to regard the design process as itself a valued activity for those who participate in it.

We have usually thought of city planning as a means whereby the planner’s creative activity could build a system that would satisfy the needs of a populace. Perhaps we should think of city planning as a valuable creative activity in which many members of a community can have the opportunity of participating—if we have wits to organize the process that way.

As per James Scott’s Seeing Like a State, the problems begin when technocrats begin to treat human beings and the complex societies they create as though they were simplified “standard cells” that can readily be re-arranged in more optimal patterns. Moreover, as Ben says elsewhere (Cosma and I quote this in our own forthcoming piece on AI and bureaucracy), political disagreement generally resists optimization. When you have incommensurable tradeoffs (even very simple ones: should you use money in your budget to pay for a playground to make parents happy or a fire station to make it less likely that businesses will burn down?), you have moved decisively away from the kinds of problems that machine learning, or optimization more generally, can simplify in useful ways.

As soon as we can’t agree on a cost function, it’s not clear what our optimization machinery … buys us. Multi-objective optimization necessarily means there is a trade-off. And we can’t optimize a trade-off.
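The playground-versus-fire-station example can be made concrete with a small Python sketch. Everything in it is an assumption made for illustration: the ten-unit budget, the square-root "benefit" curves and the function names are invented, and nothing here comes from Ben's writing. It shows that the optimizer only returns an answer once someone has supplied a weight on parents versus businesses, and that the answer changes with the weight.

```python
import math

BUDGET = 10  # hypothetical budget units to split between the two projects

def parent_happiness(playground_spend):
    # Assumed diminishing returns; the square root is an arbitrary choice.
    return math.sqrt(playground_spend)

def fire_safety(station_spend):
    # Same arbitrary curve for the fire station.
    return math.sqrt(station_spend)

def best_split(weight_on_parents):
    """Playground spend that maximizes one particular weighted cost function."""
    def combined(playground_spend):
        return (weight_on_parents * parent_happiness(playground_spend)
                + (1 - weight_on_parents) * fire_safety(BUDGET - playground_spend))
    return max(range(BUDGET + 1), key=combined)

for weight in (0.2, 0.5, 0.8):
    print(f"weight on parents {weight}: spend {best_split(weight)} on the playground")
```

With these made-up numbers the three weights yield playground budgets of 1, 5 and 9. Each is "optimal" for its own weight, and the code has nothing to say about which weight is right. That choice is the political disagreement, and it sits outside the optimization.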

Barring the development of radically different approaches, there is no reason to believe that politics will come into the sweet spot. But many mathematical rationalists argue otherwise (e.g. this set of claims, which maybe deserve their own extended response). If you want to really understand the limits on AI, you owe it to yourself to read Ben’s book. There are many books on technology that are smart in some sense, but very few that are wise. This is one of those very rare exceptions.

The danger is not that AI can optimize everything. It’s that institutions may increasingly restructure reality so more domains become optimizable.

"...or that excitable claim that humanity is doomed to be superseded because of the performance of AI on this or that test". We're doomed to be superseded because that's what the purveyors and profiteers are pushing for.

Human judgement can't really be measured, much less optimized. Finding those areas outside the "sweet spot" of AI optimization is where the remaining (hopefully gainful) employment will be found.