Our Future Is Being Devoured By Feral Thought Experiments

Here's a chance to start taking it back.

I haven’t been writing as much here over the last several weeks, because of multiple other writing commitments. Some of these are about to start bearing fruit. Substack posts will get easier now that the semester is coming to a close, but in the meantime, here’s a short piece to tide you over, and an opportunity for those as wants to take it up.

One of the pieces that is forthcoming (co-authored with Cosma Shalizi) relates indirectly to the theme of this Elias Isquith post. Elias draws a comparison between the thinking of AI figures like Altman and the bizarre homespun philosophy of Anton Chigurh, the killer in the movie No Country for Old Men.1

in both cases, we have men positing that they have a unique grasp of the nature of the universe and the direction of human history. And at the risk of stating the obvious: This is not a small claim!

In another era, in another culture, this hubristic assertion of having discerned the golden path to the inevitable future would be grounds for charges of heresy or blasphemy. The egomania here is astounding, no less so than that of a character who fancies himself Fate (and/or Death) incarnate.

And in both cases, we are confronted with false prophets who are telling us, albeit in different registers, that human superfluousness is either already true (Chigurh) or inevitable (Altman). In the face of such monstrous certainty, despair would be understandable.

I think that it is worth drawing out a difference between Chigurh and Altman that is hinted at in Elias’s indirect Dune reference. Chigurh’s philosophy is a cockeyed version of determinism, suggesting that we don’t have real choices going forward. Altman is drawing on a much stranger set of notions, which suggests that our present is not determined by our past, or by chance, but by a radically constrained version of the future. A lot of AI discourse reverses the arrow of time, so that instead of a fixed past and an indefinite future, we face a definite future, which directly or indirectly shapes its own past.

This follows from standard arguments about the Singularity. People who subscribe to middling-to-stronger versions of Singularity thinking believe that we are about to hit a massive phase-change in human history, which will have a dichotomous outcome. Once strong AI hits, either the machines master us, or we master the machines. The result is to render present concerns largely irrelevant, except insofar as our collective decisions make us more likely to follow the one path or the other. The AI 2027 paper provides a fine example of the genre.

And it is a genre; a concatenation of LessWrong posts, academic and sort-of-academic articles, think-tank thought pieces, Substack posts and the like, which not only build on a similar set of tropes, but also (and here is the point I want to emphasize) adopt a narrative structure that interprets the present only in terms of some posited near-term future of radical transformation. It’s notable how significant elements of the mythology of the Singularity - things that people in this space don’t usually fully believe, but which often indirectly affect their thinking - describe direct intervention by some future that rearranges the past so as to ensure that it comes into being. The most obvious example here is Roko’s Basilisk (more generally: when you consider the collective fate of humanity as an exercise in applied game theory, backward induction may lead you to some very strange conclusions).

This famous quote from Nick Land is even more on point:

what appears to humanity as the history of capitalism is an invasion from the future by an artificial intelligent space that must assemble itself entirely from its enemy’s resources.

The obvious rebuttal is no: we actually can’t see the future of AI. We can’t even engage in the limited kinds of hedged predictions that we can make when we model reasonably well understood phenomena. The question, “How will technology develop in the future?” is an open-ended one. If innovation were predictable in its consequences, like the tech tree in Civilization, we’d be in a very different kind of history altogether than the history we are in.

What we can see are the outcomes of thought experiments. Thought experiments can be very useful as a means of thinking more systematically about unknowns. However, you shouldn’t overestimate what you can do with them. In the end, thought experiments are nothing other than moderately disciplined guesswork. When we mistake them for reliable predictions of what lies ahead, and reshape our world around what they say, we’re liable to end up in a mess, unless we are improbably brilliant, lucky, or both.

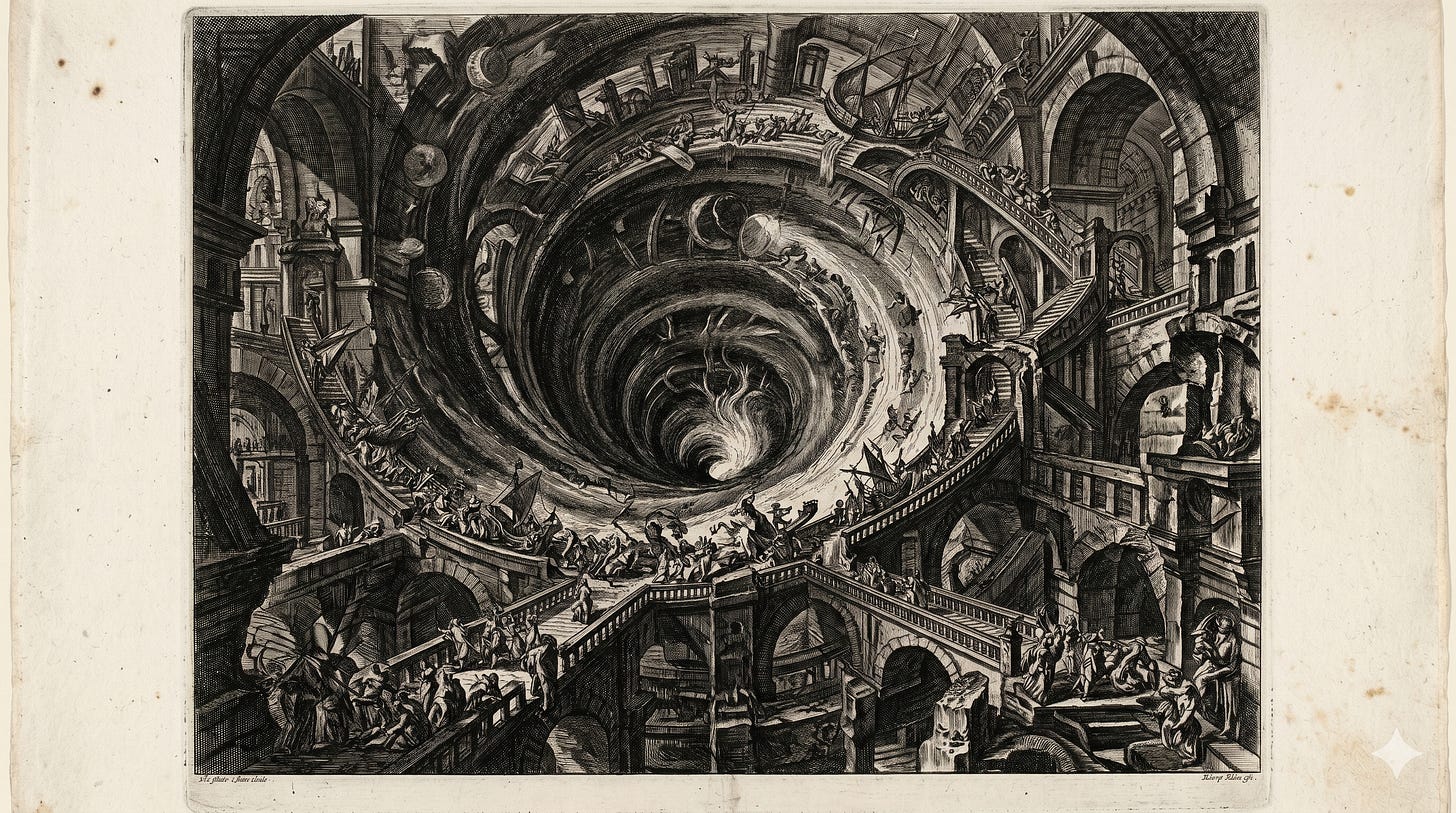

But we are in a world where many people - including very important policy makers - see a particular strain of thought experiments as being determinative. I don’t think that these people are stupid or wicked, but I am frustrated with how their arguments are driving out other ways of thinking (that may finally be changing now that concerns about economic disruption are coming to the fore, but it is changing much more slowly than I would like). That makes it much harder to see the enormous variety of futures that might be possible, depending on the choices we make and their consequences, both predictable and stochastic. Those possible futures are being devoured by thought experiments which have gone feral, and have spread like an invasive species from their proper environment into the realm of general discourse. That stunts democratic debate and understanding of the choices we do and don’t have.

Some version of this complaint informs the opening parts of a jointly written paper with Cosma Shalizi that should be out soon. You can plausibly read this classic post of his as yet another version of this complaint, which was aimed at an earlier version of this discourse. If we see the Singularity as having happened in the past, we can better understand the ways in which it is contingent, rather than extrapolating the eschaton from growth charts.

Enough griping: here’s the positive opportunity. The Protopian Prize competition for short fiction that imagines a democratic future is going to open up tomorrow. There will be a $5,000 prize for the winning entry, and I’m going to be one of the judges. While I wasn’t even slightly involved in setting up the prize, and the bit that I am concerned with is not about AI, the competition will surely generate a variety of different possible futures, all of which will start from an understanding of how or whether we can collectively steer towards one direction or another. That kind of steering is, after all, what democracy involves. I don’t have any further particulars beyond what is on the website, and absolutely don’t want to suggest that people should write in one or another vein (the variety of possibilities seems to be the point), but do encourage you to enter. As per Elias’s post, I would like fewer thought experiments about how we have no or very few choices to make, and more thinking about how our future is not constrained to one or the other narrowly defined path.

Which is based on Cormac McCarthy’s novel. Which, in turn, takes its title from Yeats’ Sailing To Byzantium, a great poem that has weird but striking application to current AI debates.

Great piece. I think so much tech determinism is a rehash of eschatology with a thermodynamics branding - which they then dispense with when it suits. The retrocausality thing is case in point.

Tiny correction: “Sailing TO Byzantium”.